The setup

Last week, I attended the Tech in Asia Jakarta 2016 conference. Digressing… This URL will not be relevant for the 2016 content next year. It is a weak URL, already rusty from the start. A good way to fix this is to set up a temporary redirect from http://events.techinasia.com/jakarta/ to http://events.techinasia.com/jakarta/2016/ so people have the right link in their bookmarks. Once the conference is finished, you can start redirecting to 2017.

Sandra Persing and Dietrich Ayala put me in touch with David Corbin to talk about Web Compatibility on the Developer stage. The conference has a strong marketing and product placement agenda. This is not my usual crowd, but it's always interesting to get a different view on how people conceive and foresee the Web.

But we have known for a long time that Web Compatibility is about participating.

Jakarta

Jakarta is a vibrant city, bustling with the sound of motorbikes. Buildings are growing everywhere. The city is being modernized at a fast pace and, as in many other cities going through these transformations, the process is socially and ecologically violent. This week, The Guardian has full live coverage of Jakarta. The street food is amazing and quality coffee places are not hard to find. The population is young, which contributes to the dynamism of the city and its startup ecosystem.

Web Compatibility talk

First talk of the morning: I was not expecting much, but wow, what a crowd. Ratri Chibi introduced me and I tried to give an overview of Web compatibility in 25 minutes.

The context

For my talks, I usually try to connect to something the audience can relate to. This time, most of my slides drew on the Indonesian mask culture (topeng). Barong is a good spirit (on the left), while Rangda is a demon (on the right). Web compatibility work is very much a battle between the good and evil ways of doing things. But we all have to remember that, as in the world of spirits, the daily reality is a lot more complex than just being right or wrong. Rangda is an evil force and… a protective force at the same time.

What do we mean when we talk about the Web? The Web is a space where a person should be able to use the device and browser of their choice to access and interact with the content. The Web has been instrumental in making information cheaply accessible anywhere on earth, at any time. It has enlarged the ability of individuals to publish content.

A couple of years ago, at Mozilla, we tested the top 1000 Web sites in Japan and China on Firefox for Android. We quickly realized that around 20% of these sites were broken in some way, up to the point of being completely unusable. The common issues were related to WebKit properties (CSS and DOM) such as old flexbox, gradient, transition, background-size, etc. Sometimes sites were relying on old non-standard properties implemented by other browsers but not by Firefox, such as innerText (the standard property at the time was textContent). In this image of the Mobage site, we clearly see the signs of first-generation flexbox.

This leads to the fragmentation of the Web and condemns people to use specific devices, when they can afford them. It is also a vicious circle. Any reasonable Web agency or IT department looks at its browser market shares before fixing a bug. If a specific browser is not visible in their analytics, they decide it's not important to support it and not worth spending time on fixing the issues. Contacting companies to get their sites fixed becomes a seduction game. When a site is totally unusable in a browser by design, that browser obviously gets zero market share on the site. It then becomes very difficult to convince the company that they will gain customers if they fix the bug. And last but not least, if too many sites are broken, it becomes a lot harder to make distribution deals with device companies, which in turn entrenches the low market share.

How do we escape from this ouroboros? When the effort on HTML restarted, some design principles were established, including the priority of constituencies:

In case of conflict, consider users over authors over implementors over specifiers over theoretical purity. In other words costs or difficulties to the user should be given more weight than costs to authors; which in turn should be given more weight than costs to implementors; which should be given more weight than costs to authors of the spec itself, which should be given more weight than those proposing changes for theoretical reasons alone. Of course, it is preferred to make things better for multiple constituencies at once.

It says that the people using the Web for reading and publishing are the most important in the ecosystem. Everything should be done so they have a smooth Web experience. Sometimes, as a Web developer, you might want to choose a less fancy solution so more people can use the service. As a browser implementor, you sometimes need to implement non-standard properties so that sites work in people's browsers.

Some Web Compatibility issues

HTTP

Web compatibility issues are not only about CSS and DOM. Sometimes they go deep into the user experience and the way we use the protocols. A couple of years ago, most Web sites had dedicated domain names for desktop computers and mobile devices. To help the user get the right experience, they used user agent sniffing (a user agent detection mechanism). Each browser sports an HTTP User-Agent string which is sent with every request and identifies the browser. Sites redirect the device to the www. or m. domain based on this user agent string. Algorithms and databases of user agent strings help Web developers make the right choice, but for one issue: only the past is known. A new user agent string on the market will be unknown and will not be recognized by these algorithms, sending the user to the wrong site. This is why I often say that user agent sniffing is a future-fail strategy. It works only if you intend to keep up with every possible browser and device coming onto the market before it actually hits the market. Mission impossible.
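A minimal sketch of why sniffing fails on the future, assuming a hypothetical site whose list of mobile patterns was written before Firefox for Android mattered to it. The pattern list, the `pickHost` function, the example.com hosts and the user agent strings are all illustrative, not taken from any real site.

```javascript
// Hypothetical sniffing table: only the user agents known when the site shipped.
const KNOWN_MOBILE_PATTERNS = [/iPhone/, /Android.*Chrome/];

// Returns the host this hypothetical server would redirect to.
function pickHost(userAgent) {
  const isKnownMobile = KNOWN_MOBILE_PATTERNS.some((re) => re.test(userAgent));
  // Anything not recognized is assumed to be a desktop browser.
  return isKnownMobile ? 'm.example.com' : 'www.example.com';
}

// Illustrative user agent strings.
const chromeAndroid =
  'Mozilla/5.0 (Linux; Android 6.0; Nexus 5) AppleWebKit/537.36 ' +
  '(KHTML, like Gecko) Chrome/52.0 Mobile Safari/537.36';
const firefoxAndroid =
  'Mozilla/5.0 (Android 6.0; Mobile; rv:48.0) Gecko/48.0 Firefox/48.0';

console.log(pickHost(chromeAndroid));  // m.example.com
console.log(pickHost(firefoxAndroid)); // www.example.com: a phone sent to the desktop site
```

The second call is the whole problem in one line: the browser is a perfectly capable mobile browser, but the table only knows the past.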

HTTP Ping Pong happens when different detection logics are applied on the mobile and desktop sites. One says: "I know your user agent string and you are a mobile device, I'm sending you to m." The mobile site in turn says: "I don't know your user agent string, you must be a desktop computer, I'm sending you to www." Which in turn… etc. If everything is done through HTTP, the browser gives up after 10 hops. The user is punished and is not able to read the content. If there's HTTP on one side and JavaScript on the other, we can enter very interesting scenarios of infinite redirection loops. Nightmares ensue.
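The ping pong can be simulated in a few lines. This is only a sketch under stated assumptions: the two detection rules, the example.com hosts and the `followRedirects` helper are hypothetical, and the hop limit of 10 simply reuses the figure quoted above.

```javascript
// Hypothetical detection rules, one per site, written by different teams.
const desktopSiteThinksMobile = (ua) => /Mobile/.test(ua);       // logic on www.
const mobileSiteThinksMobile = (ua) => /iPhone|Chrome/.test(ua); // logic on m.

// Follow redirects until a site serves content or the hop limit is reached.
function followRedirects(startHost, ua, maxHops) {
  let host = startHost;
  for (let hops = 0; hops < maxHops; hops += 1) {
    const next =
      host === 'www.example.com'
        ? (desktopSiteThinksMobile(ua) ? 'm.example.com' : host)
        : (mobileSiteThinksMobile(ua) ? host : 'www.example.com');
    if (next === host) return { served: host, hops }; // no redirect: content is served
    host = next;
  }
  return { served: null, hops: maxHops }; // the browser gives up
}

// Illustrative user agent strings.
const iphone =
  'Mozilla/5.0 (iPhone; CPU iPhone OS 9_3 like Mac OS X) AppleWebKit/601.1.46 ' +
  '(KHTML, like Gecko) Version/9.0 Mobile/13E233 Safari/601.1';
const firefoxAndroidUA =
  'Mozilla/5.0 (Android 6.0; Mobile; rv:48.0) Gecko/48.0 Firefox/48.0';

console.log(followRedirects('www.example.com', iphone, 10));
// { served: 'm.example.com', hops: 1 }
console.log(followRedirects('www.example.com', firefoxAndroidUA, 10));
// { served: null, hops: 10 }
```

Both sites are individually "correct" according to their own rules. The loop only appears because the two rules disagree about the same user agent string.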

CSS

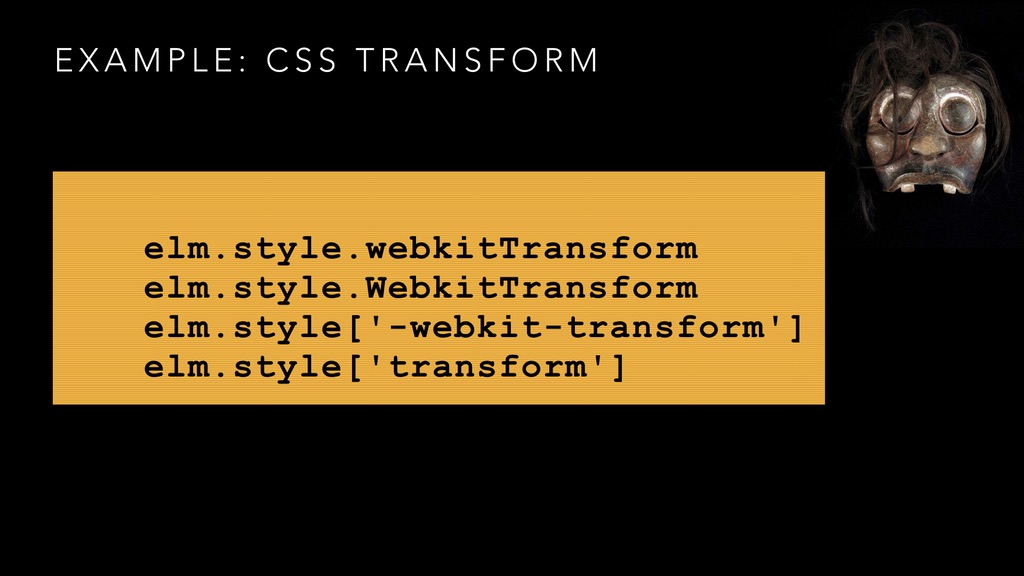

elm.style.webkitTransform

elm.style.WebkitTransform

elm.style['-webkit-transform']

elm.style['transform']

Which of these four properties is implemented in Edge (Microsoft) and Firefox (Mozilla)? Our natural instinct would say the fourth one, elm.style['transform'], which is the standard way to assign a transform to an element. But because of the wide spread of broken JavaScript and bogus libraries, the need to be compatible with the Web forced us to implement the others too. It's the story of broken things on the Web, the story of our own failures to do the right thing. So we patch our stories so that people can continue to follow along with us.

This kind of thing led the core team of Firefox (working on Gecko), pushed by the Web compatibility team, to implement many WebKit aliases. Some properties really need just an alias, but some are more subtle, such as flexbox. The first implementation of flexbox is very different from the current one, and websites using only the first version (i.e. written for WebKit/Safari) would break if we simply converted it to modern flexbox.

You might think that we are rewarding the bad developers. That we should strive for quality and take a stand for doing the right (technical) thing. But what is technically right is not necessarily right for the users. The screenshot of the Mobage website in Firefox for Android (above) is symptomatic of what we can see on the mobile Web in Japan. In 2014/2015, we identified that 20% of Japanese sites were broken because they used -webkit- only CSS properties and WebKit-specific JavaScript. We spent months, sometimes years, contacting Web sites without getting them to change their sites. So we had to bend our technical righteousness and implement some -webkit- features. Opera Presto (RIP) did the same before us. So did Microsoft Edge. In the end, the version on the right side of the screenshot is far more usable for end users.
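For a script author, one way to avoid depending on a single spelling is to detect which one exists before using it. Here is a minimal sketch; the `firstSupported` helper and the mock style objects are hypothetical, and the plain objects only stand in for elm.style so the example runs outside a browser.

```javascript
// The four spellings listed above, with the standard one tried first.
const TRANSFORM_CANDIDATES = [
  'transform',
  'webkitTransform',
  'WebkitTransform',
  '-webkit-transform',
];

// Returns the first candidate the style object knows about, or null.
function firstSupported(style, candidates) {
  for (const name of candidates) {
    if (name in style) return name;
  }
  return null;
}

// Mock style objects (assumptions for illustration, not real browser data).
const modernStyle = { transform: '', webkitTransform: '', WebkitTransform: '' };
const legacyWebkitStyle = { webkitTransform: '' };

console.log(firstSupported(modernStyle, TRANSFORM_CANDIDATES));       // transform
console.log(firstSupported(legacyWebkitStyle, TRANSFORM_CANDIDATES)); // webkitTransform
console.log(firstSupported({}, TRANSFORM_CANDIDATES));                // null
```

In a page, the first argument would be something like document.createElement('div').style. Trying the standard name first means the prefixed spellings are only used where nothing better exists.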

JavaScript and Legacy

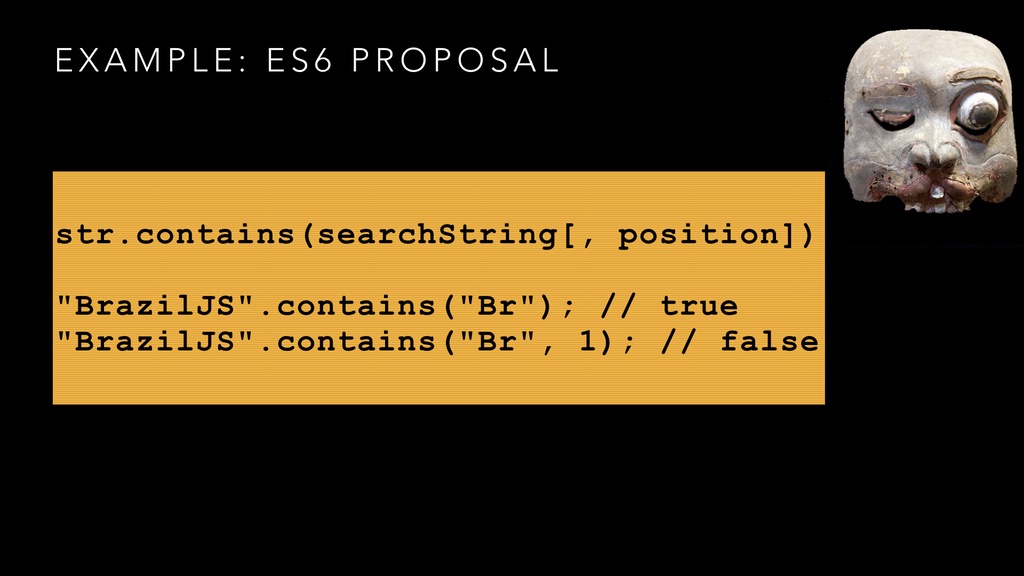

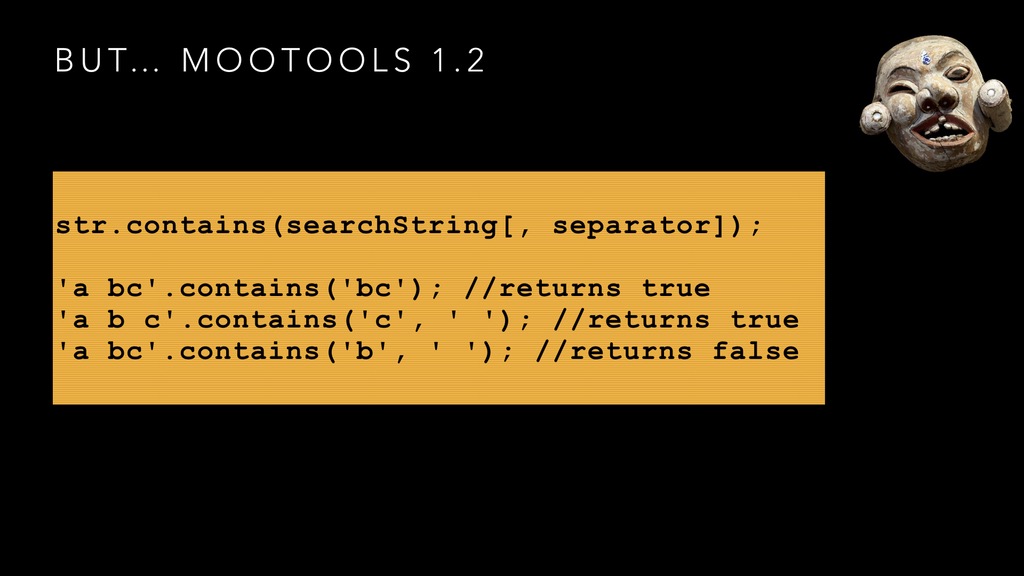

Issues with Web compatibility come in many forms. Sometimes they are just an exposure of the history and success of some JavaScript libraries. During the development of ES6, a new method was proposed to finally be able to search for a substring inside a string. contains was the most logical name to choose.

str.contains(searchString[, position])

until… (you know there is a recurring joke here and that there will be an issue, but let's continue with it) Mozilla deployed the new feature in Firefox Nightly and broke many Web sites using MooTools 1.2. This well-known library had a method with the same name but not exactly the same semantics. Firefox had to back out the change to remove the regression. Luckily, this happened before any production release.

The ES6 crew had to propose a new name: includes instead of contains. Sometimes we discover Web compatibility issues only once we deploy a new feature and realize that a well-known site or a successful library is relying either on a bug in the browser or on a similar feature.
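One defensive pattern for script authors is to keep such helpers out of String.prototype altogether, so they can never collide with a future native method. A minimal sketch, where `hasSubstring` and `includesCompat` are hypothetical names chosen for this example:

```javascript
// Substring search as a plain function. It does not touch String.prototype,
// so it cannot clash with a native or library-defined method of the same name.
function hasSubstring(str, searchString, position = 0) {
  return str.indexOf(searchString, position) !== -1;
}

// Prefer the native ES6 method when it exists, fall back to indexOf otherwise.
function includesCompat(str, searchString, position = 0) {
  return typeof String.prototype.includes === 'function'
    ? str.includes(searchString, position)
    : hasSubstring(str, searchString, position);
}

console.log(includesCompat('Otsukare', 'kare'));    // true
console.log(includesCompat('Otsukare', 'kare', 5)); // false: the search starts after the "k"
```

The trade-off is a slightly less fluent call site in exchange for code that keeps working whatever name the standard ends up choosing.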

Contacting Web sites

Why do these issues happen in the first place? Why can't people just code according to the standards? It's a very hard question. The Web is not only a technical object. It's not just code out of a compiler. The Web exists because of a socio-economic structure. It's a deeply social object. Asking why some sites are broken is like wondering why there is pollution and why people can't just individually do the right thing. If you have worked or are working in a Web agency as a Web developer, you know you have a boss (or a project manager) and that there are clients. You know that the taboo Friday deployment is still happening. That the budget is undervalued. That the timeline for delivering the project is too tight. That the browsers themselves have their own set of issues. So people make choices, cut corners and test only a certain number of scenarios.

Usually we try to contact Web sites. We make an attempt at discovering who is in charge, but here again: boss, client, Fridays, legacy Web sites, maintenance budget in oblivion.

Sometimes the issues are deeper and come directly from the specification. When you read "left to implementations" in a specification, run away from the feature. It means that the specification doesn't clearly explain the behavior of the feature, and implementers will have to decide for themselves how to fill the gap in the description. That often leads to interoperability issues. Sometimes it's because Web developers are using some features in "creative" ways that implementers would never have thought of. Any system contains within itself ways of being exploited in unfathomable directions.

How To Do A Better Job?

There are ways to mitigate the issues. On webcompat.com, we developed the tool cssfixme. It is not perfect, but it's a good way to understand how to fix your WebKit-only CSS. Better still is to use tools such as PostCSS. They take care of the incompatibilities and the prefixing strategy for you, which helps you focus on what matters. They do the hard work of salting your CSS with the appropriate prefixes for each browser.
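To give an idea of the simplest kind of fix involved, here is a toy sketch that adds the unprefixed declaration after each -webkit- one when it is missing. It is an assumption-laden illustration only: `addUnprefixed` and its array-of-objects input are hypothetical, and this is not how cssfixme or PostCSS are implemented.

```javascript
// Hypothetical helper: for each "-webkit-foo: value" declaration, add the
// unprefixed "foo: value" right after it, unless the block already declares "foo".
function addUnprefixed(declarations) {
  const declared = new Set(declarations.map((d) => d.prop));
  const out = [];
  for (const decl of declarations) {
    out.push(decl);
    if (decl.prop.startsWith('-webkit-')) {
      const standard = decl.prop.slice('-webkit-'.length);
      if (!declared.has(standard)) {
        out.push({ prop: standard, value: decl.value });
        declared.add(standard);
      }
    }
  }
  return out;
}

const fixed = addUnprefixed([
  { prop: 'color', value: 'red' },
  { prop: '-webkit-transition', value: 'opacity 1s' },
]);
console.log(fixed.map((d) => `${d.prop}: ${d.value}`));
// [ 'color: red', '-webkit-transition: opacity 1s', 'transition: opacity 1s' ]
```

A plain rename like this only works for properties where the prefixed and standard versions share the same syntax. Cases such as old flexbox or old gradient syntax need a real conversion, which is exactly why dedicated tools are the better choice.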

Prefixes were a well-intended idea (remember what I said about systems), but because of the socio-economic structure of the Web, they became an Achilles' heel for some browsers. A browser with a very large market share can deploy a feature with a prefix, and because Web developers don't want to wait before using it in production, it becomes a de facto standard. That's really unfortunate, but it is the reality.

So browsers have decided on a new policy and put new features behind settings that can be flipped on and off. Most users do not change, or do not even have access to, these types of settings, so Web developers can't rely on them for the next production release of their site. At the same time, they can easily test the features by activating them. The caveat is that there will be fewer chances to discover issues, but it's a lot better for the browser ecosystem.

MDN is a treasure trove. This set of documentation, hosted by Mozilla and written by the large Web developer community, gives a lot of information about Web features, including Web compatibility tables. And if some information is missing, just contribute. I would also especially recommend caniuse.com.

If you find a bug in a browser, you need to report it to the browser vendor. This is essential. Too few Web developers report issues about Web browsers, even though browsers are at the core of their daily work. By reporting issues and bugs, you help browsers become better for your future work. The bugs you encounter in developing your own site are unique. Browser implementers can't imagine them or make them up.

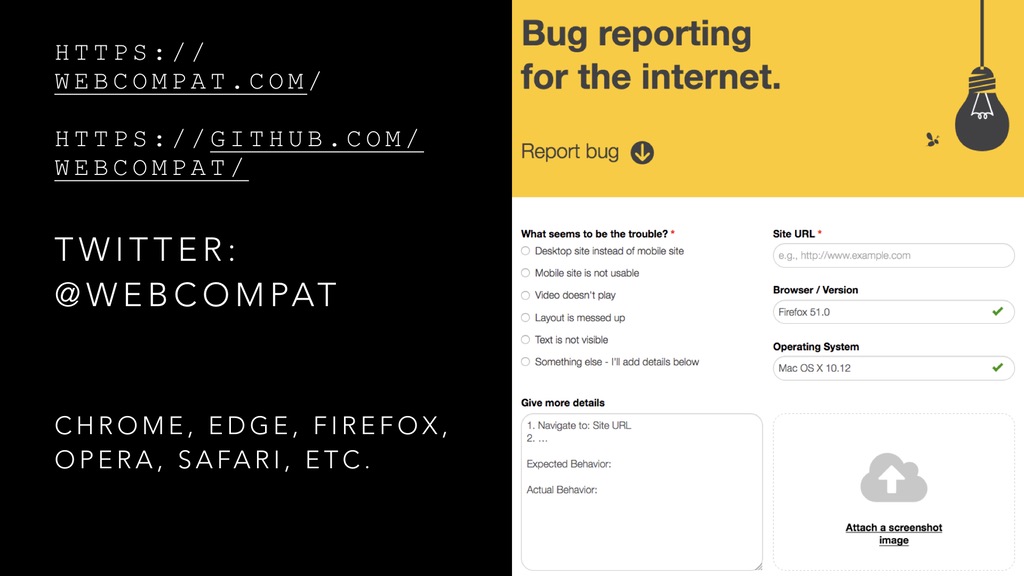

Finally, if you notice, as a user or a Web developer, a Web site with different rendering or behavior in different browsers, do not hesitate to report it to webcompat.com. Make sure to test in multiple browsers. Be careful about your add-ons (such as ad blockers): they often create differences between browsers.

If you have questions, you can ask @webcompat or @mozwebcompat on Twitter. You can also communicate on the publicly archived webcompat mailing list.

Otsukare!